Industrial systems are generating more data than ever before. Machines, sensors, vehicles, energy systems, and production lines continuously emit streams of telemetry that capture everything from temperature and vibration to operational states and error logs.

This data is incredibly valuable. It powers predictive maintenance, energy optimization, digital twins, and AI-driven operational intelligence. But there is a problem: most traditional databases were never designed to handle industrial IoT data.

Organizations often discover this only after their first large-scale deployment. What starts as a promising analytics initiative quickly turns into a struggle with ingestion bottlenecks, slow queries, rigid schemas, and growing operational complexity.

To understand why this happens, it is important to look at the fundamental characteristics of industrial telemetry data.

1. The Velocity Problem: Massive Data Ingestion

Industrial environments generate data continuously.

A single factory can produce millions of records per hour. A fleet of connected machines or vehicles can easily produce billions of records per day. Each sensor event typically contains a timestamp, device or machine identifiers, multiple measurements, and operational states or metadata.
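To make these volumes concrete, here is a rough back-of-envelope sketch. The fleet size, sensor count, and sampling rate are hypothetical, and the calculation assumes every sensor reading is stored as its own record.

```python
def records_per_day(devices: int, sensors_per_device: int, samples_per_second: float) -> int:
    """Records emitted per day if every sensor reading becomes one record."""
    seconds_per_day = 24 * 60 * 60
    return int(devices * sensors_per_device * samples_per_second * seconds_per_day)


# Hypothetical fleet: 10,000 machines, 20 sensors each, one reading per second.
fleet_total = records_per_day(devices=10_000, sensors_per_device=20, samples_per_second=1.0)
print(f"{fleet_total:,} records/day")  # 17,280,000,000 records/day
```

Even a modest one-reading-per-second rate reaches billions of records per day at this fleet size.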

Traditional relational databases were primarily designed for transactional workloads where records are inserted at moderate rates and frequently updated.

Industrial telemetry is different. It is append-heavy and extremely high velocity. Data arrives continuously from thousands or millions of devices, and it must be ingested without slowing down analytics or operational systems.

When traditional systems attempt to handle this workload, they often struggle with write bottlenecks, index maintenance overhead, ingestion latency, and scaling limitations.

As data rates increase, ingestion pipelines become fragile and expensive to operate.
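One common way to reduce per-record write overhead is to group events into batches before writing them. The sketch below is illustrative only: `BatchBuffer`, its `sink` callback, and the batch size of 500 are hypothetical names and values, not part of any specific database client.

```python
from typing import Callable, Dict, List


class BatchBuffer:
    """Collects events and hands them to `sink` in batches instead of one by one."""

    def __init__(self, sink: Callable[[List[Dict]], None], max_batch: int = 500) -> None:
        self.sink = sink
        self.max_batch = max_batch
        self.pending: List[Dict] = []

    def add(self, event: Dict) -> None:
        self.pending.append(event)
        if len(self.pending) >= self.max_batch:
            self.flush()

    def flush(self) -> None:
        # Write whatever is buffered, then start a fresh batch.
        if self.pending:
            self.sink(self.pending)
            self.pending = []


# Stand-in sink that only records batch sizes; a real sink would perform a bulk write.
batches_written: List[int] = []
buffer = BatchBuffer(sink=lambda batch: batches_written.append(len(batch)), max_batch=500)
for i in range(1_200):
    buffer.add({"device_id": f"dev-{i % 10}", "ts": i, "temperature": 20.0 + i % 5})
buffer.flush()  # write the final partial batch
print(batches_written)  # [500, 500, 200]
```

A production pipeline would typically also flush on a timer so that slow streams do not hold events back indefinitely.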

2. The Schema Problem: Constantly Changing Data

Industrial telemetry rarely follows a perfectly stable schema. New sensors appear. Firmware updates introduce additional fields. Devices from different manufacturers emit different payload formats. Machine learning pipelines may enrich events with new attributes.

As a result, industrial telemetry often arrives as semi-structured JSON payloads whose structure evolves over time.

Traditional relational databases expect schemas to be well-defined and relatively static. Schema changes require migrations, downtime, or complex data transformation processes.

In industrial environments, however, schema evolution is the norm, not the exception. Systems that cannot adapt dynamically force engineers to spend time maintaining data pipelines instead of extracting insights from the data.
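A minimal sketch of what adapting dynamically can mean in practice: instead of rejecting payloads with unknown fields, the ingesting side extends its known schema as new fields appear. The function name, field names, and payloads below are hypothetical, and real systems also have to handle type conflicts and nested objects.

```python
from typing import Any, Dict, List


def merge_schema(schema: Dict[str, str], payload: Dict[str, Any]) -> List[str]:
    """Add unseen fields from `payload` to `schema`; return the names that were new."""
    new_fields = []
    for field, value in payload.items():
        if field not in schema:
            schema[field] = type(value).__name__
            new_fields.append(field)
    return new_fields


schema: Dict[str, str] = {}
payloads = [
    {"device_id": "pump-1", "ts": 1700000000, "temperature": 71.5},
    # A firmware update adds a vibration reading.
    {"device_id": "pump-1", "ts": 1700000060, "temperature": 71.9, "vibration_mm_s": 2.4},
    # A device from another vendor reports its firmware version instead.
    {"device_id": "pump-2", "ts": 1700000060, "firmware": "2.1.0"},
]
for payload in payloads:
    added = merge_schema(schema, payload)
    if added:
        print("new fields:", added)
```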

3. The Cardinality Problem: Millions of Unique Dimensions

Industrial telemetry often contains extremely high-cardinality attributes.

Examples include device IDs, machine IDs, customer installations, geographic coordinates, session identifiers, and production batch numbers.

These dimensions are essential for operational analytics. Engineers need to filter and aggregate data by device, location, production line, or time window.

Traditional analytics systems frequently struggle with high-cardinality dimensions because indexes become large and queries become slower as the number of unique values increases.

This problem becomes particularly acute in scenarios like fleet management platforms, smart energy grids, and global industrial monitoring systems.

As the number of connected devices grows, query performance can degrade dramatically in systems that were not designed for this level of dimensionality.
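Cardinality is simply the number of distinct values a dimension takes. A small sketch, using made-up events, shows how differently dimensions in the same dataset can behave:

```python
from typing import Dict, Iterable, List


def cardinality(events: Iterable[dict], dimensions: List[str]) -> Dict[str, int]:
    """Count distinct values per dimension across the given events."""
    seen = {dim: set() for dim in dimensions}
    for event in events:
        for dim in dimensions:
            if dim in event:
                seen[dim].add(event[dim])
    return {dim: len(values) for dim, values in seen.items()}


# Hypothetical sample: 1,000 devices spread over 3 sites, each reporting one of 2 states.
events = [
    {"device_id": f"dev-{i}", "site": f"site-{i % 3}", "state": "ok" if i % 10 else "fault"}
    for i in range(1_000)
]
counts = cardinality(events, ["device_id", "site", "state"])
print(counts)  # {'device_id': 1000, 'site': 3, 'state': 2}
```

Here `device_id` grows with every device added to the fleet, while `site` and `state` stay small. It is the first kind of dimension that puts pressure on indexes.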

4. The Query Problem: Real-Time Operational Analytics

Industrial analytics is rarely limited to simple dashboards.

Operations teams need to run complex queries such as:

- aggregations across billions of events

- anomaly detection queries over recent data

- correlation across machines and sensors

- search within operational logs

- geospatial analysis of assets in motion

In other words, industrial telemetry requires a combination of analytics, search, and time-series processing.
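As one illustration of an anomaly detection check over recent data, the sketch below flags a reading that deviates strongly from a recent window. It uses a simple z-score rule with an assumed threshold of 3 standard deviations; the readings are invented, and real deployments often use more robust methods.

```python
from statistics import mean, stdev
from typing import List


def is_anomalous(history: List[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag `latest` if it lies more than `threshold` standard deviations
    from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    center = mean(history)
    spread = stdev(history)
    if spread == 0:
        return latest != center
    return abs(latest - center) / spread > threshold


recent_vibration = [2.1, 2.3, 2.2, 2.4, 2.2, 2.3, 2.1, 2.2]  # hypothetical mm/s readings
print(is_anomalous(recent_vibration, 2.3))  # False
print(is_anomalous(recent_vibration, 6.8))  # True
```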

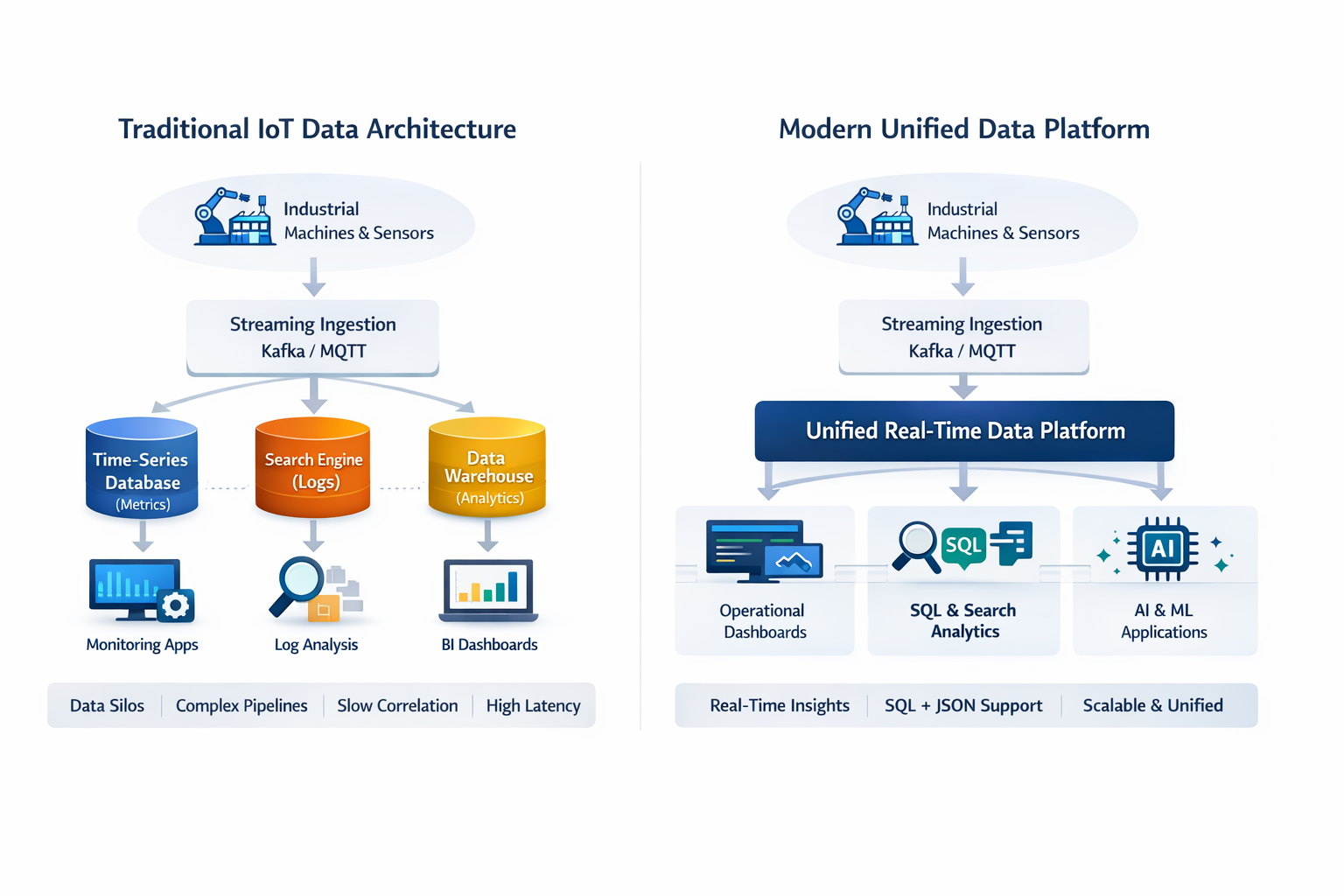

Traditional architectures often split these workloads across multiple systems:

- a time-series database for metrics

- a search engine for logs

- a warehouse for analytics

This fragmentation increases complexity, latency, and operational costs. It also makes it harder to correlate data across systems.
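When metrics and logs live in one SQL-capable store, correlating them can be a single join. The sketch below uses Python's built-in `sqlite3` module purely as a stand-in to show the shape of such a query. SQLite is not a distributed telemetry database, and the table names, column names, and data are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE metrics (device_id TEXT, ts INTEGER, vibration REAL);
    CREATE TABLE logs (device_id TEXT, ts INTEGER, message TEXT);
""")
conn.executemany("INSERT INTO metrics VALUES (?, ?, ?)", [
    ("pump-1", 100, 2.2), ("pump-1", 160, 6.8),
    ("pump-2", 100, 2.1), ("pump-2", 160, 2.3),
])
conn.executemany("INSERT INTO logs VALUES (?, ?, ?)", [
    ("pump-1", 150, "bearing temperature warning"),
    ("pump-2", 155, "routine self-test passed"),
])

# For each high-vibration reading, find log lines from the same device
# in the 60 seconds leading up to it.
rows = conn.execute("""
    SELECT m.device_id, m.ts, m.vibration, l.message
    FROM metrics AS m
    JOIN logs AS l
      ON l.device_id = m.device_id
     AND l.ts BETWEEN m.ts - 60 AND m.ts
    WHERE m.vibration > 5.0
    ORDER BY m.ts
""").fetchall()
print(rows)  # [('pump-1', 160, 6.8, 'bearing temperature warning')]
```

With metrics and logs in separate systems, the same question usually means exporting data from both and joining it in application code.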

5. The Time Problem: Insights Must Be Immediate

In industrial operations, insights often need to arrive within seconds, not hours.

Examples include detecting abnormal vibration in a turbine, identifying energy inefficiencies in real time, spotting failures across thousands of devices, and responding to logistics disruptions.

Traditional analytics platforms often rely on batch pipelines and delayed processing. Data may only become queryable after minutes or hours.

For operational use cases, this delay is unacceptable. Systems must support near-real-time ingestion, immediate indexing, and interactive queries on fresh data.
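A simple way to reason about this delay is worst-case staleness: an event that just misses a batch cut-off waits a full interval for the next batch, and then for that batch to be processed. The intervals and processing times below are hypothetical.

```python
def worst_case_staleness_s(batch_interval_s: float, processing_time_s: float) -> float:
    """Longest wait before an event becomes queryable in a batch pipeline."""
    return batch_interval_s + processing_time_s


hourly_batch = worst_case_staleness_s(batch_interval_s=3600, processing_time_s=600)
micro_batch = worst_case_staleness_s(batch_interval_s=1, processing_time_s=0.5)
print(hourly_batch / 60, "minutes vs", micro_batch, "seconds")  # 70.0 minutes vs 1.5 seconds
```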

Without these capabilities, analytics becomes backward-looking rather than operational.

The Architectural Gap

When organizations try to run industrial IoT analytics on traditional databases, they encounter a fundamental mismatch between data characteristics and system architecture.

Industrial telemetry requires a platform that can handle:

- continuous high-throughput ingestion

- flexible and evolving schemas

- extremely high cardinality

- real-time analytical queries

- unified handling of structured and semi-structured data

Many traditional systems were not built for this combination of requirements.

Rethinking the Data Platform for Industrial Systems

To support modern industrial analytics, organizations increasingly look for platforms that combine several capabilities in a single architecture:

- distributed, shared-nothing scalability

- real-time ingestion pipelines

- dynamic schema support

- SQL analytics across large telemetry datasets

- unified handling of time-series, JSON, search, and vector data

These capabilities allow organizations to analyze operational data as it is generated, rather than hours or days later.

As industrial systems continue to digitize and AI-driven operations become more common, the ability to analyze high-velocity telemetry in real time is becoming a foundational requirement.

Industrial IoT data requires a database built for high-velocity telemetry, real-time analytics, and evolving data structures.

CrateDB was designed specifically for these workloads, combining high-throughput ingestion, SQL analytics, and search across structured and semi-structured data in a single distributed platform.

If you're building systems that need to analyze billions of events in real time, learn how CrateDB simplifies industrial analytics architectures.