You Have the Sensor Data.

Your Analytics Run on Last Night's Export.

CrateDB connects to your existing OT stack and runs OEE, predictive maintenance, and cross-plant queries on live sensor data. Standard SQL. No historian replacement required.

The data is there. The analytics are not.

Your historians and PLCs ingest without issue. The problem shows up when you need answers.

Traditional time-series databases handle simple metric ingestion at low cardinality well. They degrade when you add dimensions — device types, plant locations, shift codes, production runs. Every new series becomes a separate storage structure. The more context you add, the slower the queries get.

The result: your operations team waits for reports updated at midnight. Your data engineers maintain two systems instead of one.

Where traditional systems fall short

- Slow calculations: OEE that takes minutes instead of milliseconds. Your shift ends before the report loads.

- Cross-system joins: Root-cause queries that require joining sensor data with ERP downtime codes across a second system — manually.

- Schema fragility: Every new sensor type triggers a pipeline migration. Adding context to your data makes everything slower.

Connect to your OT stack. Query the moment data arrives.

Connect without replacing

CrateDB connects to your existing industrial sources via Telegraf — OPC-UA, MQTT, SCADA, and historian outputs. Your PLCs, historians, and SCADA infrastructure stay in place.

Index everything on ingestion

CrateDB indexes every field automatically on ingestion. No manual index management, no batch loading step, no DBA required.

Standard SQL, existing tools

Write SQL with joins, CTEs, window functions, and aggregations. Your BI tools connect via the PostgreSQL wire protocol — no new query language.
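To illustrate the kind of statement this refers to, here is a small sketch that combines a CTE, a window function, and a join between sensor readings and downtime codes. SQLite from Python's standard library is used purely as a stand-in so the snippet runs without a cluster; it is not how CrateDB is accessed. The table names, columns, and values are hypothetical.

```python
import sqlite3

# In-memory SQLite database as a stand-in (assumption: SQLite 3.25+ for window functions).
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE sensor_readings (machine_id TEXT, ts INTEGER, temperature REAL);
CREATE TABLE downtime_events (machine_id TEXT, ts INTEGER, reason_code TEXT);
INSERT INTO sensor_readings VALUES
  ('press-1', 1, 70.0), ('press-1', 2, 71.5), ('press-1', 3, 90.0),
  ('press-2', 1, 65.0), ('press-2', 2, 65.5);
INSERT INTO downtime_events VALUES ('press-1', 3, 'OVERHEAT');
""")

# CTE + window function: temperature change since the previous reading per machine,
# then a join that attaches the downtime reason recorded at the same timestamp.
query = """
WITH deltas AS (
    SELECT machine_id, ts, temperature,
           temperature - LAG(temperature) OVER (
               PARTITION BY machine_id ORDER BY ts
           ) AS temp_delta
    FROM sensor_readings
)
SELECT d.machine_id, d.ts, d.temp_delta, e.reason_code
FROM deltas AS d
JOIN downtime_events AS e
  ON e.machine_id = d.machine_id AND e.ts = d.ts
ORDER BY d.machine_id, d.ts
"""
rows = conn.execute(query).fetchall()
print(rows)  # [('press-1', 3, 18.5, 'OVERHEAT')]
```

Against a real deployment, a similar statement would be sent through a PostgreSQL-protocol client or a BI tool instead of SQLite.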

Dynamic columns

OEE root-cause analysis.

On data that just arrived.

Standard SQL. No proprietary functions. No pre-aggregation. The data is live.

```sql
/* Based on IoT device reports, this query returns the voltage variation over time
   for a given meter_id */
WITH avg_voltage_all AS (
    SELECT meter_id,
           avg("Voltage") AS avg_voltage,
           date_bin('1 hour'::INTERVAL, ts, 0) AS time
    FROM iot.power_consumption
    WHERE meter_id = '840072572S'
    GROUP BY 1, 3
)
SELECT time,
       (avg_voltage - lag(avg_voltage) OVER (PARTITION BY meter_id ORDER BY time)) AS var_voltage
FROM avg_voltage_all
ORDER BY time
LIMIT 10;
```

```text
+---------------+-----------------------+
|          time |           var_voltage |
+---------------+-----------------------+
| 1166338800000 |                  NULL |
| 1166479200000 |     -2.30999755859375 |
| 1166529600000 |      4.17999267578125 |
| 1166576400000 |      -0.3699951171875 |
| 1166734800000 |   -3.7100067138671875 |
| 1166785200000 |   -1.5399932861328125 |
| 1166893200000 |    -3.839996337890625 |
| 1166997600000 |                  9.25 |
| 1167044400000 |    0.4499969482421875 |
| 1167174000000 |     3.220001220703125 |
+---------------+-----------------------+
```
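To make the logic of this query easier to follow, here is a rough Python equivalent for a single meter: readings are grouped into hourly buckets aligned to the epoch, averaged, and each average is compared with the previous bucket. The first row has no predecessor, which is why the first `var_voltage` is `NULL`. The function name and sample voltages are hypothetical; only the bucketing and difference logic mirror the query.

```python
from collections import defaultdict

HOUR_MS = 3_600_000  # one hour in milliseconds


def hourly_voltage_deltas(readings):
    """readings: iterable of (ts_ms, voltage) tuples for a single meter.

    Returns a list of (bucket_start_ms, delta) where delta is the change in
    average voltage versus the previous non-empty hourly bucket (None for the first).
    """
    buckets = defaultdict(list)
    for ts_ms, voltage in readings:
        # Same idea as date_bin('1 hour', ts, 0): floor to the hour, origin at epoch 0.
        buckets[ts_ms - ts_ms % HOUR_MS].append(voltage)

    result = []
    previous_avg = None
    for bucket_start in sorted(buckets):
        avg = sum(buckets[bucket_start]) / len(buckets[bucket_start])
        # Same idea as avg_voltage - lag(avg_voltage): no previous row gives None.
        delta = None if previous_avg is None else avg - previous_avg
        result.append((bucket_start, delta))
        previous_avg = avg
    return result


# Made-up readings: two in the first hour, one in the next.
sample = [
    (1166338800000, 240.0),
    (1166338800000 + 60_000, 242.0),
    (1166342400000, 238.5),
]
deltas = hourly_voltage_deltas(sample)
print(deltas)  # [(1166338800000, None), (1166342400000, -2.5)]
```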

```sql
/* Based on IoT device reports, this query returns the voltage corresponding to
   the maximum global active power for each meter_id */
SELECT meter_id,
       max_by("Voltage", "Global_active_power") AS voltage_max_global_power
FROM iot.power_consumption
GROUP BY 1
ORDER BY 2 DESC
LIMIT 10;
```

```text
+------------+--------------------------+
| meter_id   | voltage_max_global_power |
+------------+--------------------------+
| 840070437W |                   246.77 |
| 840073628P |                   246.69 |
| 840074265G |                   246.54 |
| 840070238E |                   246.35 |
| 840070335K |                   246.34 |
| 840075190M |                   245.15 |
| 840072876X |                   244.81 |
| 840070636M |                   242.98 |
| 84007B113A |                   242.93 |
| 840073250D |                   242.28 |
+------------+--------------------------+
```
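The `max_by` aggregate in this query returns, for each meter, the voltage from the row where global active power peaks. A minimal Python sketch of that behaviour follows; the function name and sample rows are hypothetical.

```python
def voltage_at_peak_power(rows):
    """rows: iterable of (meter_id, voltage, global_active_power) tuples.

    Returns {meter_id: voltage recorded at that meter's highest power reading}.
    """
    best = {}  # meter_id -> (highest power seen so far, voltage at that reading)
    for meter_id, voltage, power in rows:
        if meter_id not in best or power > best[meter_id][0]:
            best[meter_id] = (power, voltage)
    return {meter_id: voltage for meter_id, (_, voltage) in best.items()}


# Made-up readings for two meters.
sample_rows = [
    ("meter-a", 240.1, 1.2),
    ("meter-a", 238.4, 3.7),
    ("meter-b", 241.0, 0.9),
]
peaks = voltage_at_peak_power(sample_rows)
print(peaks)  # {'meter-a': 238.4, 'meter-b': 241.0}
```

The query then sorts these per-meter values in descending order and keeps the first ten.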

See it for yourself in under 30 minutes

Examples of AI workloads in production

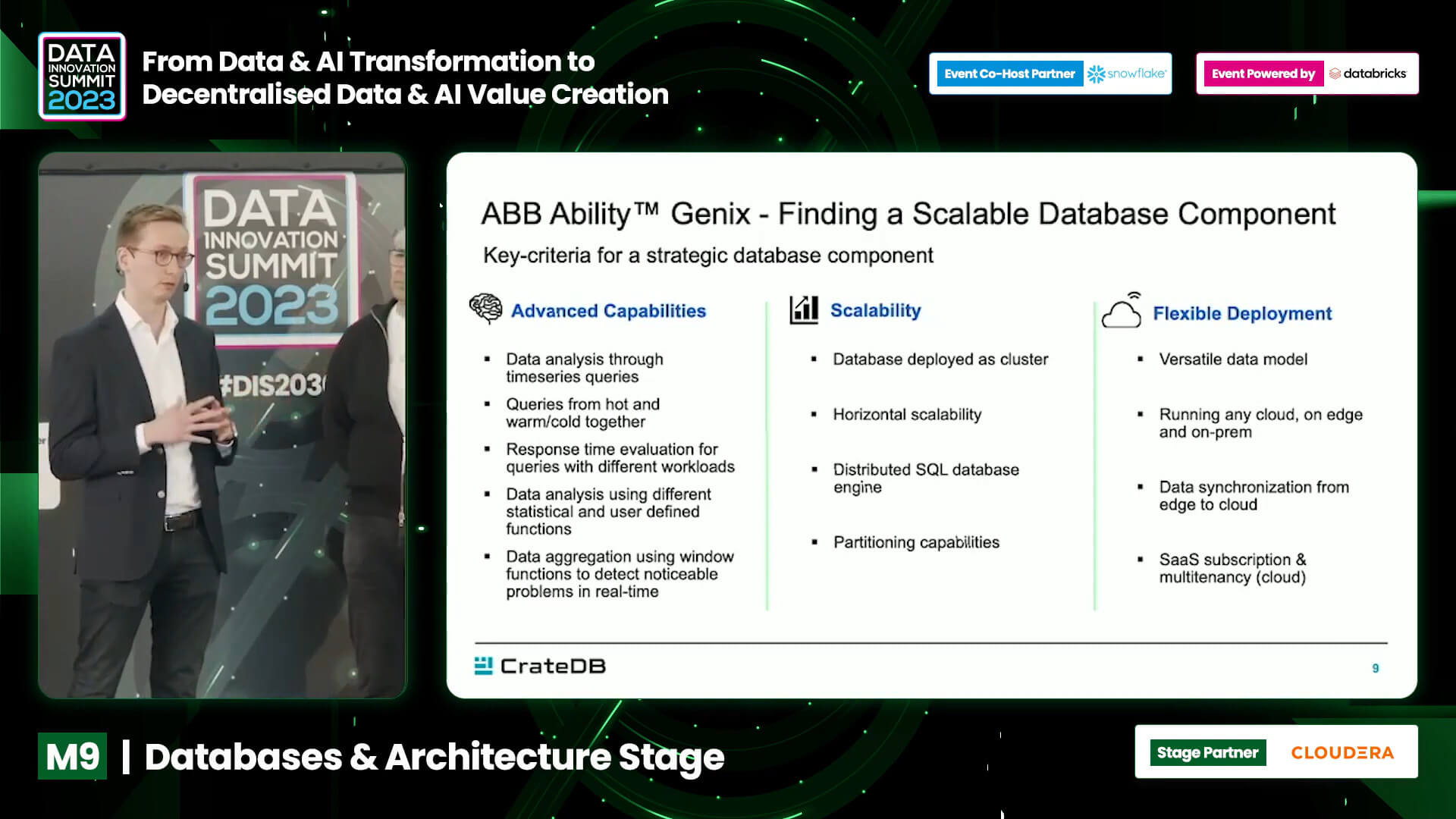

ABB's OPTIMAX® Cloud for Smart Charging is a state-of-the-art power management system designed for EV charging stations and heavy vehicle depots in the logistics and bus industries. It provides smart load management for ABB and non-ABB EV chargers, and integrates with external assets such as battery storage, PV systems, and interfaces to grid operators’ systems.

"CrateDB is a critical piece of our OPTIMAX® Cloud platform. Its ability to handle vast amounts of time-series data from diverse sources, while delivering real-time insights, has allowed us to scale our operations seamlessly. With CrateDB, we’ve empowered our customers with smarter energy management, reduced costs, and supported a more sustainable future."

Christian Kohlmeyer

Product Owner Mobility & Sites

ABB

Using CrateDB, TGW accelerates data aggregation and access from warehouse systems worldwide, resulting in increased database performance. The system can handle over 100,000 messages every few seconds.

"CrateDB is a highly scalable database for time series and event data with a very fast query engine using standard SQL."

Alexander Mann

Owner Connected Warehouse Architecture

TGW Logistics Group

"With CrateDB, we can continue designing products that add value to our customers. We will continue to rely on CrateDB when we need a database that offers great scalability, reliability and speed."

Nixon Monge Calle

Head of IT Development and Projects

SPGo! Business Intelligence

FAQ

Streaming analytics refers to real-time processing and analysis of data as it flows in (e.g. from sensors, logs, events). It allows organizations to detect anomalies, trigger actions, and make decisions immediately, rather than relying on batch processing that introduces latency.

CrateDB is engineered for scalable ingestion from sources such as Kafka, MQTT, CDC streams, and logs. It features automatic indexing and distributed architecture that let it scale horizontally and sustain large throughput while serving sub-second queries.

Yes, CrateDB supports native SQL. You can query streaming and time-series data using familiar SQL semantics (aggregations, windowing, joins) without needing to learn a proprietary query language.

CrateDB supports flexible schemas, allowing you to ingest semi-structured data (e.g. JSON fields) and evolve your schema over time. This makes it easier to adapt as new sources and fields emerge.

CrateDB is particularly effective in scenarios such as IoT (sensor monitoring), log and event analytics, anomaly detection, fleet/transport monitoring, real-time dashboards, AI/ML feature pipelines, and any system requiring near-instant insight from high-volume continuous data.